Race Report – Avalon 50K

Off the wind on this heading lie the Marquesas

We got eighty feet of the waterline nicely making way

In a noisy bar in Avalon I tried to call you

But on a midnight watch I realized why twice you ran away

From “Southern Cross” – Crosby, Stills & Nash

The sun was setting over Avalon

The last time we stood in the west

From the album “Avalon Sunset” by Van Morrison

I had never been to Avalon before, although I had been interested in visiting for many years. The Avalon 50K seemed like a great reason to finally go, and of course a great way to see the island. 32 miles of winding race course ensures that you see much more than the casual tourist.

We flew into LAX and took a Lyft down to Long Beach to catch the Catalina Express ferry, along with a lot of other runners, for the one-hour-and-twenty-minute crossing to Avalon. The harbor in Avalon is compact and filled with a variety of boats bobbing in the water. We were there in the off-season, so other than the runners and crew there wasn’t much going on. We made our way to the Edgewater Hotel, which, true to its name, was right on the main street facing the harbor. The location was perfect, as the race start and finish were literally at the hotel’s front door. This would come in handy the next morning when it was raining at the start.

When we wandered through town to packet pickup, we noticed a flurry of unusual activity…the doors of the shops in town were being sandbagged in anticipation of a big storm rolling through. The race director called a special mandatory briefing to discuss the potential implications of the weather, the resulting course changes and, more generally, the character-building nature of an endurance run in these conditions. Armed with that information, we found a little family-owned Italian restaurant that looked unchanged since the ’50s, had a meal, and headed back to get prepped for the day ahead.

Race day dawned wet and windy, as expected, so we huddled under an awning about 10 feet from the start line, bundled in rain jackets, headlamps on since it was still pitch dark. We took off through the streets of Avalon and started climbing right away. Very quickly the rain subsided, so we peeled off the rain layer and went back to work. It wasn’t long before daylight started creeping in and we could see that the sky was clearing…so much for the deluge!

While this race is billed as a trail ultra, we ran on asphalt for a long way before it turned into dirt road. There was no single-track, and it was definitely a road open to traffic, not a fire road or the kind of course you would normally associate with a trail run. Had I known, I definitely would have worn road shoes. There were some extremely muddy sections due to the overnight rain, a clay that stuck to the shoes in what felt like 10-pound chunks, but that was going to happen regardless of shoe type.

The scenery on course was spectacular: sweeping vistas of the ocean, waves crashing into rugged shoreline, small coves with homes, and ships on the water. The course profile claimed 6,000 feet of total elevation gain, but possibly due to weather-related course changes I only clocked 4,000 feet of vertical. After topping out on the back side of the course, I thought I had a chance to do a little racing on the final 3-mile downhill into Avalon with a guy who had been trading places with me most of the day. Unfortunately, he had an extra gear and buried me on the first stretch, so I settled into a good pace and enjoyed the views heading into town. Andi was there to greet me, and I felt pretty good about my finishing time and about the day I was fortunate enough to have, with the rain holding off the entire time. Not 10 minutes after I finished, the skies opened up and everyone got the drenching we had expected…except for me, as I was already tucking into a celebratory Guinness while cheering the runners in and waiting for my other friends still on the course.

All in all, a great small event: well organized, fewer than 300 runners, and a fun visit to a really cool place. I’ll be back!

I am pretty sure that the only New Year’s resolution I have ever truly kept was when I resolved never to make another New Year’s resolution. I can unequivocally say that I have kept that one! I am not a believer in resolutions, but I am a big believer in goal setting. In their seminal work “Built to Last,” Jim Collins and Jerry Porras introduced the concept of BHAGs (Big Hairy Audacious Goals). I am not necessarily a fan of that acronym, but I am a huge believer in setting big goals and developing a plan to achieve them.

So, it’s already January 22nd. I postponed this post so that I could see whether my hypothesis was correct. So… if you made a New Year’s resolution, are you still on track, or is it already in the rearview mirror?

By almost any measure, New Year’s resolutions fail…and fail fast. Statistically, only 8% of those who make a change commitment at New Year’s are successful. So, what happens with the 92% who fail?

Without genuine internal commitment to change, few have the discipline to stick to a strict change regimen for the 21 to 60 days it takes for a new behavior to become a habit. The start of a new calendar year often isn’t enough of a catalyst to make someone truly determined to make a meaningful life change. If it is truly a change you want to make, why wait for the new calendar to show up? Having resolve and making resolutions are two very different things.

There are a few ways that can help you get into that exclusive 8% club.

One of the ways people set themselves up for failure is by trying to tackle too many things at once. Let’s face it…change is hard. Making even one significant life change at a time is difficult to manage, so trying to make several almost guarantees failure. Choose one, maybe two, really meaningful changes that you are genuinely committed to, and then move on to step 2…the plan.

Going into a change without a plan puts your success at substantial risk. I focus on two things that I know must be addressed in my plan, which I call “triggers” and “barriers.” Triggers are situations and behaviors that you know will imperil your goal. Trying to quit smoking, but you know that going to the bar with your friends who smoke will trigger your old behavior? Have a plan to avoid that situation. Barriers are the things that prevent you from achieving your objective. For example, I won’t exercise unless I have actually put it on the calendar at a time I know I can honor, and I know that if I put it off until the end of the day and my run is competing with a glass of wine…let’s just say the run doesn’t always happen.

Once you have pared down your target list, it’s time to get SMART.

Specific

Measurable

Achievable

Realistic

Time-bound

These are the foundations of effective goal setting. Without employing this framework, you might have wishes, hopes, and dreams…but you don’t really have goals and tangible objectives. Set big goals, by all means, but pair them with a SMART plan to achieve them.

The good news is that New Year’s Day is in the past, so any goals you set right now aren’t really New Year’s resolutions. They are carefully considered lifestyle changes that are achievable with the right planning, motivation, and discipline!

If it ain’t broke, fix it. And fast.

Steve Prefontaine of Oregon set a U.S. record in the 3,000-meter race on Saturday, June 26, 1972 in the Rose Festival Track Meet at Gresham, Oregon. His time was 7 minutes, 45.8 seconds.

I bet you read that headline twice, didn’t you?

It may seem backwards, but stay with me here.

Earlier this week I met with a Senior VP of Operations at a Fortune 200 company. I asked a few questions from my standard battery about business operations, operational excellence, and what new initiatives were underway.

When I asked about business operations, an innocent and perhaps overly broad question, I received a vigorous and somewhat surprising response.

Here’s what he said.

“Everything is going great! All of our operating metrics are trending up. Quality and pace are improving. Costs are dropping, particularly as we redeploy to the cloud. However, we are starting from scratch in many areas and dispensing with our traditional methodologies and recreating how we function despite solid results.”

Counterintuitive? Perhaps not.

I once heard a saying that went something like this.

“Your processes are perfectly designed to get you the results you are getting right now.”

Read that again. It is a powerful statement.

So are the results you’re getting now where you want to be? I don’t know an executive who would say yes to that question. And you shouldn’t either.

My friend has a keen grasp of the macro operating environment, i.e., what lies beyond the horizon of his current operating responsibilities. Knowing that his competitors may leapfrog him as they adopt new technologies keeps him on his toes, even those competitors who may not share the level of excellence his organization has created.

The business playing field tilts even further in favor of companies that innovate and stay at the forefront of increasing business velocity, particularly those that do it before it becomes necessary. (Read: too late.)

To tip the scales your way in the world of ‘every company is a software company’, you must:

- Implement new principles like DevOps, Agile, and continuous improvement

- Be driven by the knowledge that what is new today will soon be old

- Prepare for (or better yet, invent!) the next set of industry-changing technologies that haven’t even been developed yet

So keep breaking, keep reinventing, and stay ahead of the also-rans!

After all, where would Steve Prefontaine have been if he’d said, “I am an extraordinary runner, I think I’ll stop trying to improve now.”

I am often asked about the difference between the record and playback testing approach and the data-driven testing methodology. This post outlines the difference between the two and illustrates why one of them is killing your productivity.

Record and playback testing methods were developed in the 1980s and were a great use of technology at the time. They allow business users and/or quality assurance testers to walk through a business process or test flow one step at a time while the tool records each screen, mouse click and data entry the user encounters.

The result is a test case that follows a single path through the application under test, with very specific data for that path. To capture a different path through the process, which is required in nearly every case, the user walks through the process again and records it separately.

Compared to manual testing, this was clearly an improvement and gave many organizations their first taste of automated testing.

Sounds great. So what’s the problem?

If your processes are very simple and rarely change, this could be an excellent solution. In most organizations, however, the applications that require comprehensive testing are complex applications that change frequently.

Imagine what would happen in the scenario above if a field were added to a screen that had already been recorded. Or if the test path changed. Or if a data-dependent operation were modified. And what if you had to run that test 50 different times with 50 different sets of data? You guessed it: you would have to re-record the process each time to get each single-path test case.

Two fundamental problems with this approach are:

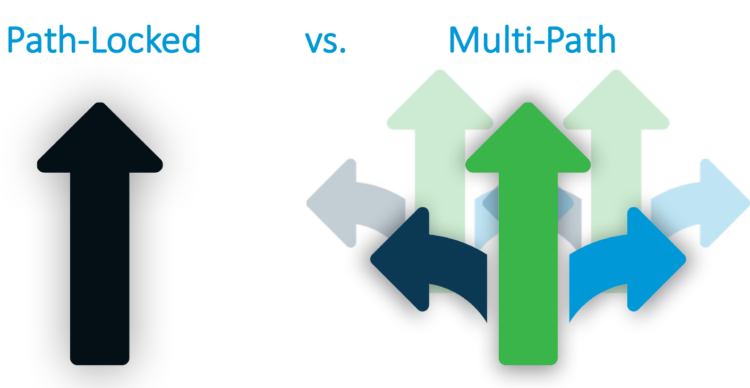

PATH-LOCKED: In record and playback, you are immediately “path-locked.” Path-locked means that the test cases created with record and playback are recordings of a single path through a business process. If one small part of that flow changes, such as a new field on one of the application screens in that flow, the scripts have to be either found and edited, or completely re-recorded. Now consider how often your applications under test actually change. For most companies, it is hundreds of times a year.

Path-locked gives a cloudy picture of your testing, at best.

This spawns a related challenge: a vast body of potentially useless recordings, with no easy way of knowing what is valid at any given moment. Companies often have different people doing this work, which also means the file names are often inconsistent, making it harder to find the right files. Rarely do people go back and archive or dispose of outdated recordings, leaving you with a multitude of test cases and no real way of knowing which are still valid.

Unfortunately, I have seen many companies simply start their record and playback efforts over from scratch, scrapping the time, effort and expertise that went into creating these assets, because starting over is easier than fixing them.

TEST COVERAGE: Record and playback makes it difficult to get a handle on your test coverage. Without a lot of manual work, which is exactly what most companies are trying to get away from, it is nearly impossible to lay out the business process visually to make sure you have the right test coverage in the right areas.

Visibility into the breadth and depth of test coverage is crucial to ensuring defects are found. Adequate test coverage is even more important in highly regulated industries, in companies that rely on their applications under test to run their businesses, and in organizations that require a high degree of accuracy. In most cases, that’s every medium to large business out there.
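
To make “path-locked” and path coverage a little more concrete, here is a minimal sketch, assuming a business process can be modeled as a directed graph of screens with no loops. The order-entry flow, the screen names, and the helpers `all_paths` and `path_coverage` are hypothetical, for illustration only. Each recording covers exactly one start-to-end path, and coverage is the share of all possible paths that have a recording.

```python
from typing import Dict, List, Set, Tuple

Path = Tuple[str, ...]

# Hypothetical order-entry flow: each screen maps to the screens that can follow it.
PROCESS: Dict[str, List[str]] = {
    "login": ["create_order"],
    "create_order": ["standard_pricing", "discount_pricing"],
    "standard_pricing": ["ship", "backorder"],
    "discount_pricing": ["ship", "backorder"],
    "ship": ["invoice"],
    "backorder": ["invoice"],
    "invoice": [],
}


def all_paths(graph: Dict[str, List[str]], start: str) -> List[Path]:
    """Enumerate every start-to-end path depth-first (assumes the flow has no loops)."""
    if not graph[start]:
        return [(start,)]
    return [(start,) + tail for nxt in graph[start] for tail in all_paths(graph, nxt)]


def path_coverage(
    graph: Dict[str, List[str]], start: str, recorded: Set[Path]
) -> Tuple[float, List[Path]]:
    """Return the fraction of current paths that have a recording, plus the untested paths."""
    paths = all_paths(graph, start)
    untested = [p for p in paths if p not in recorded]
    return (len(paths) - len(untested)) / len(paths), untested


# Two record and playback sessions; each one is locked to exactly one path.
recorded: Set[Path] = {
    ("login", "create_order", "standard_pricing", "ship", "invoice"),
    ("login", "create_order", "discount_pricing", "ship", "invoice"),
}

ratio, untested = path_coverage(PROCESS, "login", recorded)
print(f"path coverage: {ratio:.0%}")  # path coverage: 50%
for path in untested:
    print("untested:", " -> ".join(path))
```

Note that a stale recording whose path no longer exists in the flow simply doesn’t count toward coverage, and a new two-way branch on a screen that every path passes through doubles the number of paths to record.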

What is data-driven testing?

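Data-driven testing, sometimes associated with keyword-based testing, is a testing method in which the test logic is kept separate from the test data, so the same test flow can be driven by many different sets of inputs and expected results.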

For example, when using TurnKey’s cFactory for your automated test creation and maintenance, you’d click the “learn” button on every screen as you walk through the process. Test components are automatically built for everything on the screen that the user can interact with. This includes check boxes, data verification, order of operation, click buttons, and more.

After you have walked through your process, an Excel datasheet is automatically created showing every component field in the process, allowing you to drive any combination of data through your test. Each component has a screenshot attached, so you know exactly where you are in the application.

Once the process has been “learned”, you can now execute multiple scenarios through the business components, and multiple data scenarios at the test case level. This is the essence of data-driven testing.
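
Here is a minimal sketch of the general data-driven idea in Python. It is not cFactory, and it assumes a hypothetical `place_order` function stands in for the application under test while a list of `OrderCase` rows stands in for the datasheet. The test flow is written once, and each row drives a different scenario through it.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


def place_order(quantity: int, unit_price: float, discount_code: str) -> float:
    """Hypothetical stand-in for the application under test."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    total = quantity * unit_price
    if discount_code == "SAVE10":
        total *= 0.9
    return round(total, 2)


@dataclass
class OrderCase:
    """One row of the hypothetical datasheet: inputs plus the expected result."""
    name: str
    quantity: int
    unit_price: float
    discount_code: str
    expected_total: Optional[float]  # None means the order should be rejected


# The "datasheet": adding a scenario means adding a row, not re-recording a script.
DATASHEET: List[OrderCase] = [
    OrderCase("single item", 1, 20.0, "", 20.0),
    OrderCase("bulk order", 10, 20.0, "", 200.0),
    OrderCase("discounted", 10, 20.0, "SAVE10", 180.0),
    OrderCase("zero quantity", 0, 20.0, "", None),
]


def run_order_test(case: OrderCase) -> bool:
    """The single, reusable test flow; only the data changes between runs."""
    try:
        actual = place_order(case.quantity, case.unit_price, case.discount_code)
    except ValueError:
        return case.expected_total is None  # a rejection passes only if one was expected
    return case.expected_total is not None and abs(actual - case.expected_total) < 0.005


results: Dict[str, bool] = {case.name: run_order_test(case) for case in DATASHEET}
for name, passed in results.items():
    print(f"{'PASS' if passed else 'FAIL'}: {name}")
```

Running the fiftieth scenario means appending a fiftieth row; the test flow itself is untouched, which is the contrast with re-recording a path for every data set.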

Data-driven component-based testing has enormous cost-saving benefits, including:

- 90% increase in test coverage: companies have seen a 90% increase in their test coverage simply by having cFactory automatically create their test cases.

- Test cycles reduced from months to days: Almac went from a 3-month to a 3-day test cycle with automated maintenance, a patented process by which cFactory detects changes in your application and automatically updates your test cases.

- No programming required: the user interface is designed for non-technical users (most often the people who are closest to the application under test).

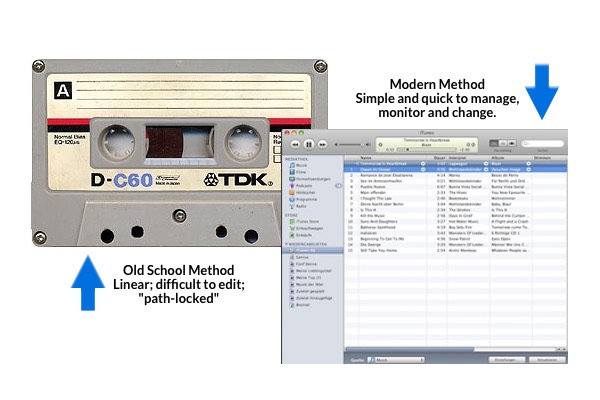

When people ask me about the difference between record and playback and data-driven testing technologies, I sometimes think about the difference between linear, fixed (path-locked) cassette tape recordings and the decidedly non-linear world of digital music. It’s kind of like that.

The Classic Achilles Heel in Test Automation

One of the classic challenges in testing, and the Achilles heel in many a test automation program is the difficulty in keeping test cases current as changes are made to the applications under test. While some applications remain reasonably static, there are others that are much more change-centric and often extremely complex.

As the pace and velocity of business accelerate, this change-centricity increases, putting more pressure than ever on application owners and QA professionals to test faster and test better.

This pressure to test faster often causes unintended deterioration in the test automation assets. The deterioration happens quickly and generally results in redevelopment of the test assets, since it is often easier to rebuild them than to unravel the changes needed to make a test case usable again.

Hardly what most of us would call intelligent automation!

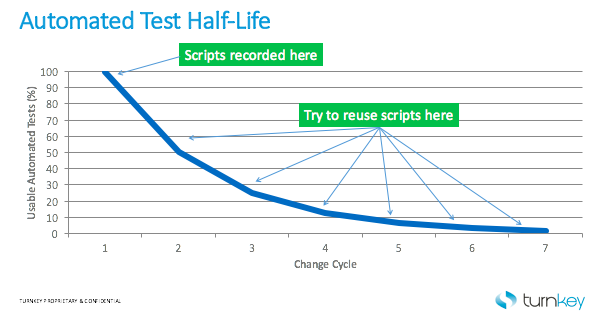

Test Case Half-Life

This chart illustrates the natural degeneration of test cases through successive application changes:

Patented Solution: Evergreen Automation™ by TurnKey

TurnKey was recently awarded a patent for our innovative application-aware capability that we affectionately call “maintenance mode,” a capability that solves the test case maintenance issue for good.

By detecting where the application differs from the test component in use, showing those changes graphically, and then automatically updating not only every affected test case but also the data management assets associated with the test cases and test sets, we create an “Evergreen Automation” process designed to keep your test assets and your as-built application in sync at all times.

This is why we call it “Evergreen Automation”: it keeps your test assets fresh and up to date at all times. We do this for all of your applications, including the mission-critical enterprise applications that run the business.

Our patented, application-aware capability can have a profound effect on the value of test automation, eliminating the extraordinary effort of largely manual test maintenance. This allows users to focus their energies on what really delivers value: more frequent test cycles, broader and deeper testing, more rapid deployments and, ultimately, big improvements in application quality.
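
The patented mechanism itself isn’t described in detail here, so the following is only a generic sketch of the idea of application-aware maintenance, not TurnKey’s implementation. It assumes a “learned” component is simply the list of field names on a screen and that test data is a list of rows keyed by field name; `LearnedComponent`, `detect_changes`, `sync_component`, and the sample fields are all hypothetical. The sketch compares what was learned with what the screen exposes now, reports the difference, and updates every data row that drives the component.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LearnedComponent:
    """Hypothetical test component: the fields captured when a screen was 'learned'."""
    screen: str
    fields: List[str]


@dataclass
class ChangeReport:
    added: List[str] = field(default_factory=list)
    removed: List[str] = field(default_factory=list)


def detect_changes(component: LearnedComponent, current_fields: List[str]) -> ChangeReport:
    """Compare the learned fields with the fields the screen exposes now."""
    learned, current = set(component.fields), set(current_fields)
    return ChangeReport(
        added=[f for f in current_fields if f not in learned],
        removed=[f for f in component.fields if f not in current],
    )


def sync_component(
    component: LearnedComponent,
    current_fields: List[str],
    data_rows: List[Dict[str, str]],
    placeholder: str = "",
) -> ChangeReport:
    """Bring the component and every data row that drives it back in line with the screen."""
    report = detect_changes(component, current_fields)
    for row in data_rows:
        for name in report.removed:
            row.pop(name, None)  # drop data for fields that no longer exist
        for name in report.added:
            row.setdefault(name, placeholder)  # new fields get a placeholder to fill in later
    component.fields = list(current_fields)
    return report


customer = LearnedComponent("customer_entry", ["name", "phone", "fax"])
rows = [
    {"name": "Acme", "phone": "555-0100", "fax": "555-0101"},
    {"name": "Globex", "phone": "555-0199", "fax": ""},
]
# The application changed: "fax" was removed and "email" was added.
report = sync_component(customer, ["name", "phone", "email"], rows)
print("added:", report.added, "removed:", report.removed)  # added: ['email'] removed: ['fax']
print(rows[0])  # {'name': 'Acme', 'phone': '555-0100', 'email': ''}
```

A real tool would also have to handle renamed fields, reordered steps, and changed validation rules, which a simple set difference like this does not capture.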

Recently an article appeared on the Computing UK website entitled “Oracle attackers ‘possibly got unlimited control over credit cards’ on US retail systems, warns ERPScan.” The article discusses the exposure of potentially every credit card used in US retail as a result of the control hackers gained over point-of-sale (PoS) systems by exploiting a vulnerability.

This isn’t really new news; we have all become somewhat numb to the stream of stories of account information being compromised from these incursions. The security software industry is focused on prevention of these data breaches and enormous amounts of time and money are spent to secure corporate systems.

What does a PoS breach have to do with application testing?

Great question…glad that you asked.

No one would deliberately delay applying these security updates unless there were significant operational barriers to doing so.

So, why is it so common to have substantial delays in applying the latest updates and patches?

Enterprise applications present numerous unique challenges to the timely application of patches, updates, support packs, etc. Here are just a few that affect an organization’s ability to deploy updates on a timely basis:

Integrated Solutions – Enterprise applications are typically tightly integrated solutions, with many functional modules. A change made to one area of the application often affects other parts of the application. These metadata-based applications must be thoroughly tested to ensure that changes and updates do not have unintended functional consequences in other parts of the application.

Highly Customized – Virtually every company modifies the software provided by the vendor to reflect the unique nature of its business. It is more common than not for an application like SAP or Oracle EBS to be 30% or more customized, which of course means that the vendor providing the patch or support pack cannot tell you what the impact on your system will be. It’s up to the user to validate that the application works functionally as expected, in its entirety. Customers are also concerned that any update provided by the vendor could negatively impact the customizations they have applied, causing even more rework and business process validation. Which leads me to my final point.

Mission Critical – These applications are often the heart of the enterprise. They run finance, HR, sales, supply chain, distribution…virtually every function of the business. A production outage, even a brief one, can have enormous consequences. Therefore, and rightly so, it makes sense to proceed with extreme caution before introducing any change into the production system.

Because the process of fully testing the applications is essential, but lengthy and resource intensive, it isn’t unusual for these patches and support packs to remain in queue to be combined with a broader set of changes so that the testing process can be done all at once.

The risk of leaving the systems vulnerable is balanced against the business risk of impacting production systems, as well as the time, cost and complexity of actually validating applications. This is where effective business process validation software products can shrink this gap and eliminate the trade-offs inherent in the standard decision process.

TurnKey Solutions specializes in tackling the hardest problems: end-to-end, cross-platform business process validation of the most complex and important applications in an enterprise. We can help shield companies from security risks while providing greater visibility, control and business agility to application owners.

photo credit: Week 36 – Cyber attack via photopin (license)

Hi, I'm Daniel Gannon. Technology executive, entrepreneur, business strategist, traveler and budding guitarist. This blog is all about sharing ideas on business and adventure. Enjoy!
